Over the past 15 years, deep learning has emerged as the dominant force driving the AI revolution. Its key strength lies in automating feature engineering, enabling powerful end-to-end learning directly from raw data and reducing reliance on domain-specific expertise. Yet, truly automated learning pipelines remain out of reach: while manual feature design has diminished, it has been replaced by a multitude of design choices (such as architectures, optimizers, and initialization schemes) that must be carefully tuned to achieve strong performance. In this sense, domain experts have largely been replaced by deep learning experts. This group addresses this challenge by developing methods to automatically configure these critical choices. We design principled algorithms, create rigorous benchmarks for fair and efficient comparison, and build practical tools to make these advances accessible to practitioners. Our research is closely aligned with the DL 2.0 ERC consolidator grant and broader European open-science initiatives in the area of large language models.

Research Topics & Interests

Fundamental HPO

We explore core research topics in hyperparameter optimization (HPO). These include black-box optimization with Bayesian optimization, known for its sample efficiency and flexibility, and user priors, which integrate human knowledge to guide the optimization process. We also investigate learning curve extrapolation and multi-fidelity optimization to accelerate optimization and allocate resources more efficiently.
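As a rough illustration of the multi-fidelity idea, the sketch below runs successive halving over learning rates: all candidates are evaluated at a small budget, only the best fraction survives, and the budget grows each round. It is a minimal sketch under stated assumptions, not one of the group's methods: `toy_validation_loss`, the budget schedule, and the search range are hypothetical stand-ins for a real training run.

```python
import math
import random


def toy_validation_loss(learning_rate: float, epochs: int) -> float:
    """Hypothetical objective: loss shrinks with budget and is lowest near lr = 1e-2."""
    distance = abs(math.log10(learning_rate) + 2.0)  # 0 when lr == 1e-2
    return distance + 1.0 / epochs


def successive_halving(configs, min_epochs=1, max_epochs=27, eta=3):
    """Evaluate all configs cheaply, keep the best 1/eta, then raise the budget."""
    survivors = list(configs)
    epochs = min_epochs
    while True:
        # Rank the surviving configurations at the current (cheap) fidelity.
        ranked = sorted(survivors, key=lambda lr: toy_validation_loss(lr, epochs))
        if len(ranked) == 1 or epochs >= max_epochs:
            return ranked[0]
        # Promote only the top 1/eta to the next, more expensive fidelity.
        survivors = ranked[: max(1, len(ranked) // eta)]
        epochs = min(epochs * eta, max_epochs)


rng = random.Random(0)
# Sample 27 learning rates log-uniformly from [1e-5, 1].
candidates = [10 ** rng.uniform(-5, 0) for _ in range(27)]
best = successive_halving(candidates)
```

With 27 candidates and `eta=3`, only one configuration is ever trained for the full 27 epochs; the other 26 are discarded after 1, 3, or 9 epochs. In this toy objective the ranking does not change with budget, which real learning curves do not guarantee.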

HPO for large-scale Deep Learning

We aim to enable hyperparameter optimization for large-scale deep learning by dramatically reducing the number of full model trainings that HPO requires. Our approach includes resource-efficient methods such as forecasting performance from early training statistics (e.g., the initial part of the learning curve), inferring hyperparameter-aware scaling laws to predict the behavior of large models from smaller ones, and using expert knowledge and meta-learning to improve sample efficiency.
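To make the scaling-law idea concrete, the sketch below fits a plain power law, loss = a * size^(-b), to a few small-model results and extrapolates to a larger size. It is only an illustration under stated assumptions: the data are synthetic, the functional form is the simplest possible choice, and the hyperparameter-aware aspect described above is not modeled. The names `fit_power_law` and `predict_loss` are hypothetical.

```python
import math


def fit_power_law(sizes, losses):
    """Least-squares fit of log(loss) = log(a) - b * log(size); returns (a, b)."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(loss) for loss in losses]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    a = math.exp(mean_y - slope * mean_x)
    return a, -slope


def predict_loss(a, b, size):
    """Evaluate the fitted power law at a (possibly much larger) model size."""
    return a * size ** (-b)


# Synthetic "small model" results generated from a known power law (a=5, b=0.1).
small_sizes = [1e6, 3e6, 1e7, 3e7]
observed = [5.0 * n ** (-0.1) for n in small_sizes]

a, b = fit_power_law(small_sizes, observed)
predicted_large = predict_loss(a, b, 1e9)  # extrapolate to a 1B-parameter model
```

Because the synthetic data are noise-free, the fit recovers the generating parameters almost exactly; with real training runs, the extrapolation carries uncertainty that a point estimate like this does not capture.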

HPO Benchmarks & Tools

We develop a range of high-quality benchmarks and open-source tools to advance hyperparameter optimization research. These tools are designed to make our research accessible and practical for non-experts. Additionally, our benchmarks provide a robust framework for comparison and evaluation across HPO studies, promoting scientific rigor and facilitating researchers’ work. Widely adopted in the HPO community, our tools and benchmarks remain a standard in the field.

Group Members

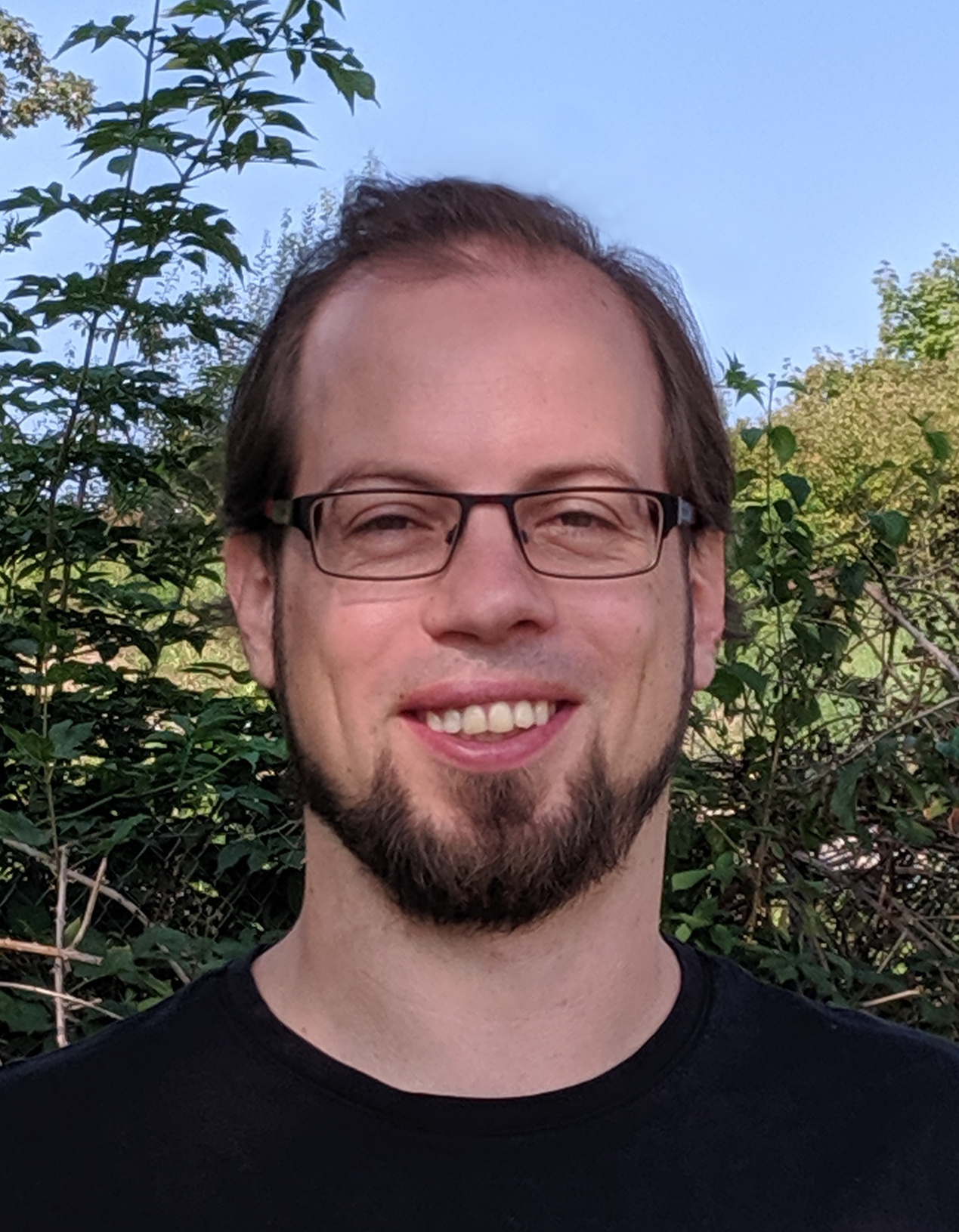

Subgroup Lead

PhD Students

Students

Alumni

Student Alumni

Theodoros Athanasiadis

Soham Basu

Samir Garibov

Shuhei Watanabe

Featured Publications

2024

In-Context Freeze-Thaw Bayesian Optimization for Hyperparameter Optimization Proceedings Article In: Proceedings of the 41st International Conference on Machine Learning (ICML), 2024.

All Publications

2025

Cost-Sensitive Freeze-thaw Bayesian Optimization for Efficient Hyperparameter Tuning Proceedings Article In: 39th Conference on Neural Information Processing Systems (NeurIPS), 2025.

Tune My Adam, Please! Proceedings Article In: Proceedings of the Fourth International Conference on Automated Machine Learning (AutoML), Non-Archival Track, 2025.

Bayesian Neural Scaling Laws Extrapolation with Prior-Fitted Networks Proceedings Article In: Proceedings of the 42nd International Conference on Machine Learning (ICML), 2025.

Frozen Layers: Memory-efficient Many-fidelity Hyperparameter Optimization Proceedings Article In: Proceedings of the Fourth International Conference on Automated Machine Learning (AutoML 2025), Main Track, 2025.

$\alpha$-PFN: In-Context Learning Entropy Search Proceedings Article In: The Frontiers in Probabilistic Inference: Sampling meets Learning (FPI) at ICLR, 2025.

Meta-learning Population-based Methods for Reinforcement Learning Journal Article In: Transactions on Machine Learning Research, 2025, ISSN: 2835-8856.

2024

Warmstarting for Scaling Language Models Proceedings Article In: NeurIPS 2024 Workshop Adaptive Foundation Models, 2024.

LMEMs for post-hoc analysis of HPO Benchmarking Proceedings Article In: Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), Workshop Track, 2024.

Fast Benchmarking of Asynchronous Multi-Fidelity Optimization on Zero-Cost Benchmarks Proceedings Article In: Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), ABCD Track, 2024.

From Epoch to Sample Size: Developing New Data-driven Priors for Learning Curve Prior-Fitted Networks Proceedings Article In: Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), Workshop Track, 2024.

In-Context Freeze-Thaw Bayesian Optimization for Hyperparameter Optimization Proceedings Article In: Proceedings of the 41st International Conference on Machine Learning (ICML), 2024.

2023

PriorBand: Practical Hyperparameter Optimization in the Age of Deep Learning Proceedings Article In: Thirty-seventh Conference on Neural Information Processing Systems (NeurIPS), 2023.

Efficient Bayesian Learning Curve Extrapolation using Prior-Data Fitted Networks Proceedings Article In: Thirty-seventh Conference on Neural Information Processing Systems (NeurIPS), 2023.

2022

Automated Dynamic Algorithm Configuration Journal Article In: Journal of Artificial Intelligence Research (JAIR), vol. 75, pp. 1633-1699, 2022.

2021

HPOBench: A Collection of Reproducible Multi-Fidelity Benchmark Problems for HPO Proceedings Article In: Vanschoren, J.; Yeung, S. (Ed.): Proceedings of the Neural Information Processing Systems Track on Datasets and Benchmarks, 2021.

DEHB: Evolutionary Hyperband for Scalable, Robust and Efficient Hyperparameter Optimization Proceedings Article In: Proceedings of the Thirtieth International Joint Conference on Artificial Intelligence (IJCAI'21), ijcai.org, 2021.

DACBench: A Benchmark Library for Dynamic Algorithm Configuration Proceedings Article In: Proceedings of the Thirtieth International Joint Conference on Artificial Intelligence (IJCAI'21), ijcai.org, 2021.

2020

Learning Step-Size Adaptation in CMA-ES Proceedings Article In: Proceedings of the Sixteenth International Conference on Parallel Problem Solving from Nature (PPSN'20), 2020.