2026

Maier, Jannis; Purucker, Lennart HAPEns: Hardware-Aware Post-Hoc Ensembling for Tabular Data Proceedings Article In: Preprint, 2026. @inproceedings{Maier2026,

title = {HAPEns: Hardware-Aware Post-Hoc Ensembling for Tabular Data},

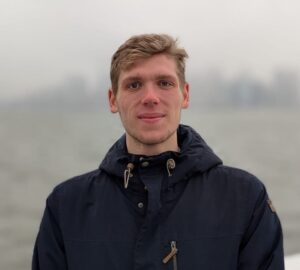

author = {Jannis Maier and Lennart Purucker},

year = {2026},

booktitle = {Preprint},

keywords = {}

}

Küken, Jaris; Hoo, Shi Bin; Purucker, Lennart; Hutter, Frank TimEE: Towards End-to-end Time Series Classification via In-Context Learning Proceedings Article In: ICLR 2026 Workshop: Time Series in the Age of Large Models, 2026. @inproceedings{Küken2026,

title = {TimEE: Towards End-to-end Time Series Classification via In-Context Learning},

author = {Jaris Küken and Shi Bin Hoo and Lennart Purucker and Frank Hutter},

year = {2026},

booktitle = {ICLR 2026 Workshop: Time Series in the Age of Large Models},

keywords = {}

}

2025

Erickson, Nick; Purucker, Lennart; Tschalzev, Andrej; Holzmüller, David; Desai, Prateek Mutalik; Salinas, David; Hutter, Frank TabArena: A Living Benchmark for Machine Learning on Tabular Data Proceedings Article In: NeurIPS 2025 Datasets and Benchmarks Track, 2025, (Spotlight). @inproceedings{Erickson2025,

title = {TabArena: A Living Benchmark for Machine Learning on Tabular Data},

author = {Nick Erickson and Lennart Purucker and Andrej Tschalzev and David Holzmüller and Prateek Mutalik Desai and David Salinas and Frank Hutter},

year = {2025},

booktitle = {NeurIPS 2025 Datasets and Benchmarks Track},

keywords = {}

}

Pfefferle, Alexander; Hog, Johannes; Purucker, Lennart; Hutter, Frank nanoTabPFN: A Lightweight and Educational Reimplementation of TabPFN Proceedings Article In: EurIPS 2025 Workshop: AI for Tabular Data, 2025. @inproceedings{pfefferle2025nanotabpfn,

title = {nanoTabPFN: A Lightweight and Educational Reimplementation of TabPFN},

author = {Alexander Pfefferle and Johannes Hog and Lennart Purucker and Frank Hutter},

year = {2025},

booktitle = {EurIPS 2025 Workshop: AI for Tabular Data},

journal = {arXiv preprint arXiv:2511.03634},

keywords = {}

}

Bühler, Magnus; Purucker, Lennart; Hutter, Frank Causal Data Augmentation for Robust Fine-Tuning of Tabular Foundation Models Proceedings Article In: EurIPS 2025 Workshop: AI for Tabular Data, 2025. @inproceedings{Bühler25,

title = {Causal Data Augmentation for Robust Fine-Tuning of Tabular Foundation Models},

author = {Magnus Bühler and Lennart Purucker and Frank Hutter},

year = {2025},

booktitle = {EurIPS 2025 Workshop: AI for Tabular Data},

keywords = {}

}

Jehle, Dominik; Purucker, Lennart; Hutter, Frank Agentic NL2SQL to Reduce Computational Costs Proceedings Article In: NeurIPS 2025 Workshop on Efficient Reasoning, 2025. @inproceedings{Jehle25,

title = {Agentic NL2SQL to Reduce Computational Costs},

author = {Dominik Jehle and Lennart Purucker and Frank Hutter},

year = {2025},

booktitle = {NeurIPS 2025 Workshop on Efficient Reasoning},

keywords = {}

}

Swelam, Omar; Purucker, Lennart; Robertson, Jake; Raum, Hanne; Boedecker, Joschka; Hutter, Frank Does TabPFN Understand Causal Structures? Proceedings Article In: EurIPS 2025 Workshop: AI for Tabular Data, 2025. @inproceedings{Swelam25,

title = {Does TabPFN Understand Causal Structures?},

author = {Omar Swelam and Lennart Purucker and Jake Robertson and Hanne Raum and Joschka Boedecker and Frank Hutter},

year = {2025},

booktitle = {EurIPS 2025 Workshop: AI for Tabular Data},

keywords = {}

}

Grinsztajn, Léo; Flöge, Klemens; Key, Oscar; Birkel, Felix; Jund, Philipp; Roof, Brendan; Jäger, Benjamin; Safaric, Dominik; Alessi, Simone; Hayler, Adrian; Manium, Mihir; Yu, Rosen; Jablonski, Felix; Hoo, Shi Bin; Garg, Anurag; Robertson, Jake; Bühler, Magnus; Moroshan, Vladyslav; Purucker, Lennart; Cornu, Clara; Wehrhahn, Lilly Charlotte; Bonetto, Alessandro; Schölkopf, Bernhard; Gambhir, Sauraj; Hollmann, Noah; Hutter, Frank TabPFN-2.5: Advancing the State of the Art in Tabular Foundation Models Proceedings Article In: Techreport, 2025. @inproceedings{Grinsztajn2025,

title = {TabPFN-2.5: Advancing the State of the Art in Tabular Foundation Models},

author = {Léo Grinsztajn and Klemens Flöge and Oscar Key and Felix Birkel and Philipp Jund and Brendan Roof and Benjamin Jäger and Dominik Safaric and Simone Alessi and Adrian Hayler and Mihir Manium and Rosen Yu and Felix Jablonski and Shi Bin Hoo and Anurag Garg and Jake Robertson and Magnus Bühler and Vladyslav Moroshan and Lennart Purucker and Clara Cornu and Lilly Charlotte Wehrhahn and Alessandro Bonetto and Bernhard Schölkopf and Sauraj Gambhir and Noah Hollmann and Frank Hutter},

year = {2025},

booktitle = {Techreport},

keywords = {}

}

Schäfer, Bastian; Purucker, Lennart; Janowski, Maciej; Hutter, Frank How Usable is Automated Feature Engineering for Tabular Data? Proceedings Article In: Non-Archival Content Track at AutoML, 2025. @inproceedings{Schäfer2025,

title = {How Usable is Automated Feature Engineering for Tabular Data?},

author = {Bastian Schäfer and Lennart Purucker and Maciej Janowski and Frank Hutter},

year = {2025},

booktitle = {Non-Archival Content Track at AutoML},

keywords = {}

}

Bischl, Bernd; Casalicchio, Giuseppe; Das, Taniya; Feurer, Matthias; Fischer, Sebastian; Gijsbers, Pieter; Mukherjee, Subhaditya; Müller, Andreas C; Németh, László; Oala, Luis; Purucker, Lennart; Ravi, Sahithya; van Rijn, Jan N; Singh, Prabhant; Vanschoren, Joaquin; van der Velde, Jos; Wever, Marcel OpenML: Insights from 10 years and more than a thousand papers Proceedings Article In: Patterns, Elsevier, 2025. @inproceedings{Bischl2025,

title = {OpenML: Insights from 10 years and more than a thousand papers},

author = {Bernd Bischl and Giuseppe Casalicchio and Taniya Das and Matthias Feurer and Sebastian Fischer and Pieter Gijsbers and Subhaditya Mukherjee and Andreas C Müller and László Németh and Luis Oala and Lennart Purucker and Sahithya Ravi and Jan N van Rijn and Prabhant Singh and Joaquin Vanschoren and Jos van der Velde and Marcel Wever},

doi = {10.1016/j.patter.2025.101317},

year = {2025},

booktitle = {Patterns},

publisher = {Elsevier},

keywords = {}

}

Feuer, Benjamin; Purucker, Lennart; Elachqar, Oussama; Hegde, Chinmay MARVIS: Modality Adaptive Reasoning over VISualizations Proceedings Article In: Preprint, 2025. @inproceedings{Feuer2025,

title = {MARVIS: Modality Adaptive Reasoning over VISualizations},

author = {Benjamin Feuer and Lennart Purucker and Oussama Elachqar and Chinmay Hegde},

year = {2025},

booktitle = {Preprint},

keywords = {}

}

Bühler, Magnus; Purucker, Lennart; Hutter, Frank Towards Synthetic Data for Fine-tuning Tabular Foundation Models Proceedings Article In: Foundation Models for Structured Data workshop at ICML, 2025. @inproceedings{Bühler2025,

title = {Towards Synthetic Data for Fine-tuning Tabular Foundation Models},

author = {Magnus Bühler and Lennart Purucker and Frank Hutter},

year = {2025},

booktitle = {Foundation Models for Structured Data workshop at ICML},

keywords = {}

}

Mráz, Martin; Das, Breenda; Gupta, Anshul; Purucker, Lennart; Hutter, Frank Towards Benchmarking Foundation Models for Tabular Data With Text Proceedings Article In: Foundation Models for Structured Data workshop at ICML, 2025. @inproceedings{Mráz2025,

title = {Towards Benchmarking Foundation Models for Tabular Data With Text},

author = {Martin Mráz and Breenda Das and Anshul Gupta and Lennart Purucker and Frank Hutter},

year = {2025},

booktitle = {Foundation Models for Structured Data workshop at ICML},

keywords = {}

}

Küken, Jaris; Purucker, Lennart; Hutter, Frank Early Stopping Tabular In-Context Learning Proceedings Article In: Foundation Models for Structured Data workshop at ICML, 2025. @inproceedings{Küken2025,

title = {Early Stopping Tabular In-Context Learning},

author = {Jaris Küken and Lennart Purucker and Frank Hutter},

year = {2025},

booktitle = {Foundation Models for Structured Data workshop at ICML},

keywords = {}

}

Garg, Anurag; Ali, Muhammad; Hollmann, Noah; Purucker, Lennart; Müller, Samuel; Hutter, Frank Real-TabPFN: Improving Tabular Foundation Models via Continued Pre-training With Real-World Data Proceedings Article In: Foundation Models for Structured Data workshop at ICML, 2025. @inproceedings{Garg2025,

title = {Real-TabPFN: Improving Tabular Foundation Models via Continued Pre-training With Real-World Data},

author = {Anurag Garg and Muhammad Ali and Noah Hollmann and Lennart Purucker and Samuel Müller and Frank Hutter},

year = {2025},

booktitle = {Foundation Models for Structured Data workshop at ICML},

keywords = {}

}

Arango, Sebastian Pineda; Janowski, Maciej; Purucker, Lennart; Zela, Arber; Hutter, Frank; Grabocka, Josif Regularized Neural Ensemblers Proceedings Article In: AutoML Conference 2025, 2025. @inproceedings{Arango2025,

title = {Regularized Neural Ensemblers},

author = {Sebastian Pineda Arango and Maciej Janowski and Lennart Purucker and Arber Zela and Frank Hutter and Josif Grabocka},

year = {2025},

booktitle = {AutoML Conference 2025},

journal = {Preprint arXiv},

keywords = {}

}

Heinzel, Carola Sophia; Purucker, Lennart; Hutter, Frank; Pfaffelhuber, Peter Advancing biogeographical ancestry predictions through machine learning Proceedings Article In: Forensic Science International: Genetics, Elsevier, 2025. @inproceedings{Heinzel2025,

title = {Advancing biogeographical ancestry predictions through machine learning},

author = {Carola Sophia Heinzel and Lennart Purucker and Frank Hutter and Peter Pfaffelhuber},

doi = {10.1016/j.fsigen.2025.103290},

year = {2025},

booktitle = {Forensic Science International: Genetics},

publisher = {Elsevier},

keywords = {}

}

Tschalzev, Andrej; Purucker, Lennart; Lüdtke, Stefan; Hutter, Frank; Bartelt, Christian; Stuckenschmidt, Heiner Unreflected Use of Tabular Data Repositories Can Undermine Research Quality Proceedings Article In: The Future of Machine Learning Data Practices and Repositories at ICLR, 2025, (Workshop Spotlight). @inproceedings{Tschalzev2025,

title = {Unreflected Use of Tabular Data Repositories Can Undermine Research Quality},

author = {Andrej Tschalzev and Lennart Purucker and Stefan Lüdtke and Frank Hutter and Christian Bartelt and Heiner Stuckenschmidt},

year = {2025},

booktitle = {The Future of Machine Learning Data Practices and Repositories at ICLR},

keywords = {}

}

Hollmann, Noah; Müller, Samuel; Purucker, Lennart; Krishnakumar, Arjun; Körfer, Max; Hoo, Shi Bin; Schirrmeister, Robin Tibor; Hutter, Frank Accurate predictions on small data with a tabular foundation model Journal Article In: Nature, vol. 637, iss. 8045, pp. 319–326, 2025, (Nature). @article{Hollmann2025,

title = {Accurate predictions on small data with a tabular foundation model},

author = {Noah Hollmann and Samuel Müller and Lennart Purucker and Arjun Krishnakumar and Max Körfer and Shi Bin Hoo and Robin Tibor Schirrmeister and Frank Hutter},

year = {2025},

journal = {Nature},

volume = {637},

pages = {319–326},

keywords = {}

}

2024

Küken, Jaris; Purucker, Lennart; Hutter, Frank Large Language Models Engineer Too Many Simple Features for Tabular Data Proceedings Article In: NeurIPS 2024 Third Table Representation Learning Workshop, 2024, (Workshop Oral). @inproceedings{Küken2024,

title = {Large Language Models Engineer Too Many Simple Features for Tabular Data},

author = {Jaris Küken and Lennart Purucker and Frank Hutter},

year = {2024},

booktitle = {NeurIPS 2024 Third Table Representation Learning Workshop},

keywords = {}

}

Hoo, Shi Bin; Müller, Samuel; Salinas, David; Hutter, Frank [TabPFN-TS] From Tables to Time: Extending TabPFN-v2 to Time Series Forecasting Proceedings Article In: NeurIPS 2024 TRL Workshop, 2024. @inproceedings{Hoo2024,

title = {[TabPFN-TS] From Tables to Time: Extending TabPFN-v2 to Time Series Forecasting},

author = {Shi Bin Hoo and Samuel Müller and David Salinas and Frank Hutter},

year = {2024},

booktitle = {NeurIPS 2024 TRL Workshop},

keywords = {}

}

Helli, Kai; Schnurr, David; Hollmann, Noah; Müller, Samuel; Hutter, Frank Drift-Resilient TabPFN: In-Context Learning Distribution Shifts on Tabular Data Proceedings Article In: Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), Workshop Track, 2024. @inproceedings{Helli2024,

title = {Drift-Resilient TabPFN: In-Context Learning Distribution Shifts on Tabular Data},

author = {Kai Helli and David Schnurr and Noah Hollmann and Samuel Müller and Frank Hutter},

year = {2024},

booktitle = {Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), Workshop Track},

keywords = {}

}

Robertson, Jake; Hollmann, Noah; Awad, Noor; Hutter, Frank FairPFN: Transformers Can do Counterfactual Fairness Conference Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), Workshop Track, 2024. @conference{Robertson2024,

title = {FairPFN: Transformers Can do Counterfactual Fairness},

author = {Jake Robertson and Noah Hollmann and Noor Awad and Frank Hutter},

year = {2024},

booktitle = {Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), Workshop Track},

keywords = {}

}

Maier, Jannis; Möller, Felix; Purucker, Lennart Hardware Aware Ensemble Selection for Balancing Predictive Accuracy and Cost Proceedings Article In: Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), Workshop Track, 2024. @inproceedings{maier2024hardware,

title = {Hardware Aware Ensemble Selection for Balancing Predictive Accuracy and Cost},

author = {Jannis Maier and Felix Möller and Lennart Purucker},

year = {2024},

booktitle = {Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), Workshop Track},

keywords = {}

}

Salinas, David; Erickson, Nick TabRepo: A Large Scale Repository of Tabular Model Evaluations and its AutoML Applications Proceedings Article In: Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), ABCD Track, 2024. @inproceedings{Salinas2024,

title = {TabRepo: A Large Scale Repository of Tabular Model Evaluations and its AutoML Applications},

author = {David Salinas and Nick Erickson},

year = {2024},

booktitle = {Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), ABCD Track},

keywords = {}

}

Bergman, Eddie; Purucker, Lennart; Hutter, Frank Don’t Waste Your Time: Early Stopping Cross-Validation Proceedings Article In: Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), Methods Track, 2024. @inproceedings{Bergman2024,

title = {Don’t Waste Your Time: Early Stopping Cross-Validation},

author = {Eddie Bergman and Lennart Purucker and Frank Hutter},

year = {2024},

booktitle = {Proceedings of the Third International Conference on Automated Machine Learning (AutoML 2024), Methods Track},

keywords = {}

}

Bergman, Edward; Feurer, Matthias; Bahram, Aron; Balef, Amir Rezaei; Purucker, Lennart; Segel, Sarah; Lindauer, Marius; Hutter, Frank; Eggensperger, Katharina AMLTK: A Modular AutoML Toolkit in Python Journal Article In: Journal of Open Source Software, vol. 9, no. 100, pp. 6367, 2024. @article{bergman2024amltk,

title = {AMLTK: A Modular AutoML Toolkit in Python},

author = {Edward Bergman and Matthias Feurer and Aron Bahram and Amir Rezaei Balef and Lennart Purucker and Sarah Segel and Marius Lindauer and Frank Hutter and Katharina Eggensperger},

year = {2024},

journal = {Journal of Open Source Software},

volume = {9},

number = {100},

pages = {6367},

keywords = {}

}

Wegmeth, Lukas; Vente, Tobias; Purucker, Lennart Revealing the Hidden Impact of Top-N Metrics on Optimization in Recommender Systems Proceedings Article In: European Conference on Information Retrieval, pp. 140–156, Springer, 2024. @inproceedings{wegmeth2024revealing,

title = {Revealing the Hidden Impact of Top-N Metrics on Optimization in Recommender Systems},

author = {Lukas Wegmeth and Tobias Vente and Lennart Purucker},

year = {2024},

booktitle = {European Conference on Information Retrieval},

pages = {140--156},

organization = {Springer},

keywords = {}

}

2023

Hollmann, Noah; Müller, Samuel; Hutter, Frank Large Language Models for Automated Data Science: Introducing CAAFE for Context-Aware Automated Feature Engineering Proceedings Article In: Thirty-seventh Conference on Neural Information Processing Systems (NeurIPS), 2023. @inproceedings{hollmann2023caafe,

title = {Large Language Models for Automated Data Science: Introducing CAAFE for Context-Aware Automated Feature Engineering},

author = {Noah Hollmann and Samuel Müller and Frank Hutter},

year = {2023},

booktitle = {Thirty-seventh Conference on Neural Information Processing Systems (NeurIPS)},

keywords = {}

}

Wegmeth, Lukas; Vente, Tobias; Purucker, Lennart; Beel, Joeran The Effect of Random Seeds for Data Splitting on Recommendation Accuracy Conference Perspectives on the Evaluation of Recommender Systems Workshop (PERSPECTIVES 2023), co-located with the 17th ACM Conference on Recommender Systems, 2023. @conference{Wegmeth2023,

title = {The Effect of Random Seeds for Data Splitting on Recommendation Accuracy},

author = {Lukas Wegmeth and Tobias Vente and Lennart Purucker and Joeran Beel},

year = {2023},

booktitle = {Perspectives on the Evaluation of Recommender Systems Workshop (PERSPECTIVES 2023), co-located with the 17th ACM Conference on Recommender Systems},

keywords = {}

}

Purucker, Lennart; Beel, Joeran CMA-ES for Post Hoc Ensembling in AutoML: A Great Success and Salvageable Failure Conference AutoML Conference 2023, 2023. @conference{Purucker2023,

title = {CMA-ES for Post Hoc Ensembling in AutoML: A Great Success and Salvageable Failure},

author = {Lennart Purucker and Joeran Beel},

year = {2023},

booktitle = {AutoML Conference 2023},

keywords = {}

}

Purucker, Lennart; Schneider, Lennart; Anastacio, Marie; Beel, Joeran; Bischl, Bernd; Hoos, Holger Q(D)O-ES: Population-based Quality (Diversity) Optimisation for Post Hoc Ensemble Selection in AutoML Conference AutoML Conference 2023, 2023. @conference{nokey,

title = {Q(D)O-ES: Population-based Quality (Diversity) Optimisation for Post Hoc Ensemble Selection in AutoML},

author = {Lennart Purucker and Lennart Schneider and Marie Anastacio and Joeran Beel and Bernd Bischl and Holger Hoos},

year = {2023},

booktitle = {AutoML Conference 2023},

journal = {AutoML Conference 2023},

keywords = {}

}

Hollmann, Noah; Müller, Samuel; Eggensperger, Katharina; Hutter, Frank TabPFN: A Transformer That Solves Small Tabular Classification Problems in a Second Proceedings Article In: The Eleventh International Conference on Learning Representations (ICLR), 2023, (top-25% of accepted papers). @inproceedings{hollmann2023tabpfn,

title = {TabPFN: A Transformer That Solves Small Tabular Classification Problems in a Second},

author = {Noah Hollmann and Samuel Müller and Katharina Eggensperger and Frank Hutter},

year = {2023},

booktitle = {The Eleventh International Conference on Learning Representations (ICLR)},

keywords = {}

}

2022

Purucker, Lennart; Stamm, Felix; Lemmerich, Florian; Beel, Joeran Estimating the Pruned Search Space Size of Subgroup Discovery Proceedings Article In: 2022 IEEE International Conference on Data Mining (ICDM), 2022. @inproceedings{Purucker2022,

title = {Estimating the Pruned Search Space Size of Subgroup Discovery},

author = {Lennart Purucker and Felix Stamm and Florian Lemmerich and Joeran Beel},

year = {2022},

booktitle = {2022 IEEE International Conference on Data Mining (ICDM)},

keywords = {}

}

Feurer, Matthias; Eggensperger, Katharina; Falkner, Stefan; Lindauer, Marius; Hutter, Frank Auto-Sklearn 2.0: Hands-free AutoML via Meta-Learning Journal Article In: Journal of Machine Learning Research, vol. 23, no. 261, pp. 1-61, 2022. @article{feurer-jmlr22a,

title = {Auto-Sklearn 2.0: Hands-free AutoML via Meta-Learning},

author = {Matthias Feurer and Katharina Eggensperger and Stefan Falkner and Marius Lindauer and Frank Hutter},

editor = {Marc Schoenauer},

year = {2022},

journal = {Journal of Machine Learning Research},

volume = {23},

number = {261},

pages = {1-61},

keywords = {}

}

Purucker, Lennart; Beel, Joeran Assembled-OpenML: Creating Efficient Benchmarks for Ensembles in AutoML with OpenML Conference First Conference on Automated Machine Learning (Late-Breaking Workshop), 2022. @conference{Purucker2022b,

title = {Assembled-OpenML: Creating Efficient Benchmarks for Ensembles in AutoML with OpenML},

author = {Lennart Purucker and Joeran Beel},

year = {2022},

booktitle = {First Conference on Automated Machine Learning (Late-Breaking Workshop)},

keywords = {}

}

2021

Bischl, Bernd; Casalicchio, Giuseppe; Feurer, Matthias; Gijsbers, Pieter; Hutter, Frank; Lang, Michel; Mantovani, Rafael G; van Rijn, Jan N; Vanschoren, Joaquin OpenML Benchmarking Suites Proceedings Article In: Proceedings of the Neural Information Processing Systems Track on Datasets and Benchmarks, 2021. @inproceedings{bischl-neurips21a,

title = {OpenML Benchmarking Suites},

author = {Bernd Bischl and Giuseppe Casalicchio and Matthias Feurer and Pieter Gijsbers and Frank Hutter and Michel Lang and Rafael G Mantovani and Jan N van Rijn and Joaquin Vanschoren},

year = {2021},

booktitle = {Proceedings of the Neural Information Processing Systems Track on Datasets and Benchmarks},

keywords = {}

}

Kadra, Arlind; Lindauer, Marius; Hutter, Frank; Grabocka, Josif Well-tuned Simple Nets Excel on Tabular Datasets Proceedings Article In: Thirty-Fifth Conference on Neural Information Processing Systems, 2021. @inproceedings{kadra2021welltuned,

title = {Well-tuned Simple Nets Excel on Tabular Datasets},

author = {Arlind Kadra and Marius Lindauer and Frank Hutter and Josif Grabocka},

year = {2021},

booktitle = {Thirty-Fifth Conference on Neural Information Processing Systems},

keywords = {}

}

Zimmer, Lucas; Lindauer, Marius; Hutter, Frank Auto-PyTorch: Multi-Fidelity MetaLearning for Efficient and Robust AutoDL Journal Article In: IEEE Transactions on Pattern Analysis and Machine Intelligence, pp. 1-1, 2021. @article{9382913,

title = {Auto-PyTorch: Multi-Fidelity MetaLearning for Efficient and Robust AutoDL},

author = {Lucas Zimmer and Marius Lindauer and Frank Hutter},

doi = {10.1109/TPAMI.2021.3067763},

year = {2021},

journal = {IEEE Transactions on Pattern Analysis and Machine Intelligence},

pages = {1-1},

keywords = {}

}

Feurer, Matthias; van Rijn, Jan N; Kadra, Arlind; Gijsbers, Pieter; Mallik, Neeratyoy; Ravi, Sahithya; Müller, Andreas; Vanschoren, Joaquin; Hutter, Frank OpenML-Python: an extensible Python API for OpenML Journal Article In: Journal of Machine Learning Research, vol. 22, no. 100, pp. 1-5, 2021. @article{feurer-jmlr21a,

title = {OpenML-Python: an extensible Python API for OpenML},

author = {Matthias Feurer and Jan N van Rijn and Arlind Kadra and Pieter Gijsbers and Neeratyoy Mallik and Sahithya Ravi and Andreas Müller and Joaquin Vanschoren and Frank Hutter},

year = {2021},

journal = {Journal of Machine Learning Research},

volume = {22},

number = {100},

pages = {1-5},

keywords = {}

}
